Designing for Testability

To begin with, why should we even care about tests? There are two general answers. One is that tests help to ensure your software works the way you intend and expect it to. This is great, but it's not the thing I'm going to focus on here. Instead I'm going to focus on the other reason: the same factors that make your software hard to test will also make it hard to maintain. By adding tests, you can find and fix those things, and so the simple fact of having tests will make your software projects more successful. Basically, tests are like vegetables. They keep your software healthy.

This is a write-up of a talk I gave at a Women Who Code event in September 2019. It's also a follow-up to my previous talk, Unit Testing: How Do I Even?

Design Patterns

First off, why do we care about these patterns? These patterns give structure to your applications. They keep the pace of software development predictable and repeatable. And they make your projects easier to test. The value of testing can't be overstated for your projects. Tests ensure that the software you build does what you intended, and keeps working as expected throughout its development lifecycle. One of the most important ways these design patterns help is by letting you mock out the dependencies of the code under test, so that each test can narrowly target specific functionality. They also allow you to isolate your tests. Tests should be isolated from environmental factors (you shouldn't need an internet connection to run unit tests). They should be isolated from each other (it shouldn't matter how many times or in what order your tests execute). And they should be isolated from data dependencies (you shouldn't have to manage a special database for test data).

Repository Pattern

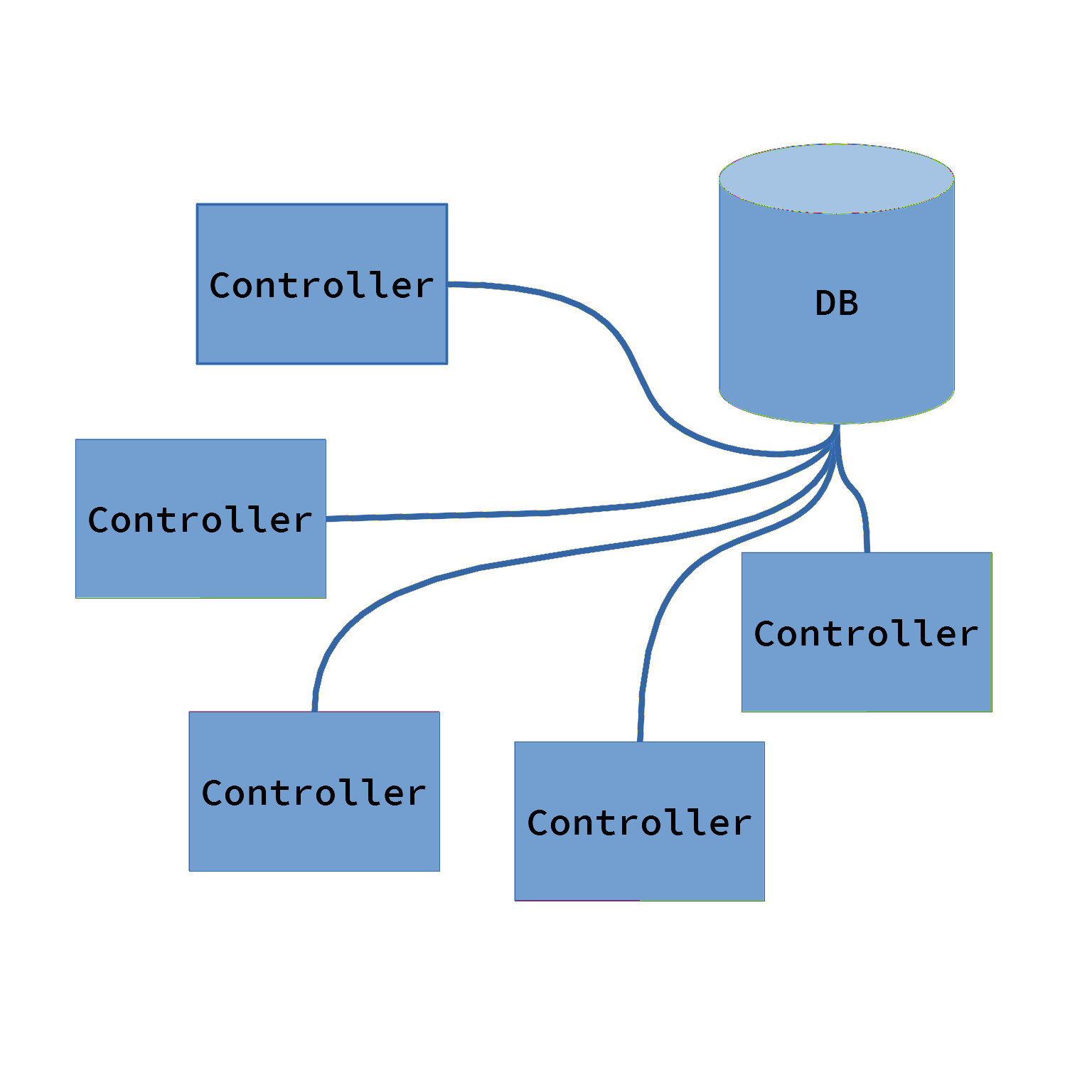

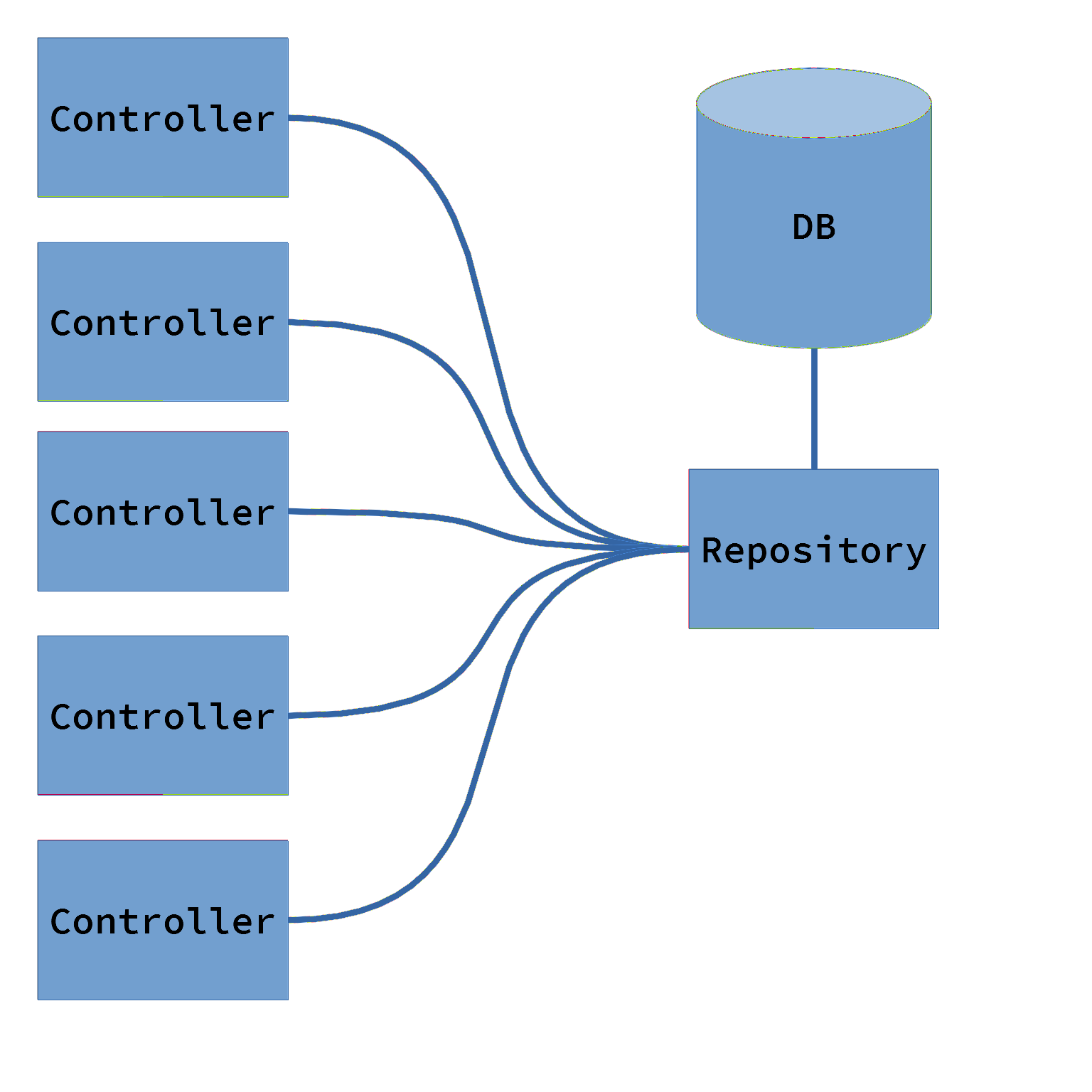

What it is: With the repository pattern, you create a custom class or object which is responsible for managing all access to your data model(s). The repository object hides all of the implementation details of where that data is stored and how the access happens. This allows you to isolate all of the IO work involved in retrieving and storing data within that repository. You can also do most of the error handling and data integrity checking here. The rest of the application doesn't need to know any of that; it just needs the data.

Why you should use it: For the purposes of testing, your custom repository object is much easier to mock than the raw network/DB/etc client object that is actually handling the input/output. Creating a mock of that repository allows you to easily and precisely control the data that's provided to the rest of your application during testing. This frees huge portions of your application from being coupled to and depending on a back end system during testing.

This pattern enforces good separation of concerns, and makes refactoring easier. You get complete control over the repository object, and can easily create mocks of it for testing. Those mock repositories can then provide any data you want to the system under test, which will enable testing any scenario you can think of, even scenarios that are hard to reproduce normally.
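As a rough illustration of the idea, here is a minimal Python sketch. The names `User`, `UserRepository`, `InMemoryUserRepository`, and `greeting_for` are all made up for this example and don't come from any particular framework. The point is only that the application code talks to the repository interface, so a test can hand it whatever data it likes.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass
class User:
    id: int
    name: str


class UserRepository(Protocol):
    """The only data-access interface the rest of the application sees."""

    def get(self, user_id: int) -> Optional[User]: ...


class InMemoryUserRepository:
    """Test double: serves exactly the records the test hands it."""

    def __init__(self, users: list[User]) -> None:
        self._users = {user.id: user for user in users}

    def get(self, user_id: int) -> Optional[User]:
        return self._users.get(user_id)


def greeting_for(repo: UserRepository, user_id: int) -> str:
    """Application code under test; it never touches a DB or network client."""
    user = repo.get(user_id)
    return f"Hello, {user.name}!" if user else "Hello, stranger!"


repo = InMemoryUserRepository([User(id=1, name="Ada")])
print(greeting_for(repo, 1))   # a known record
print(greeting_for(repo, 99))  # a missing record, with no back end involved
```

A production repository would implement the same `get` method on top of a real database or network client, and `greeting_for` wouldn't need to change.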

Dependency Injection

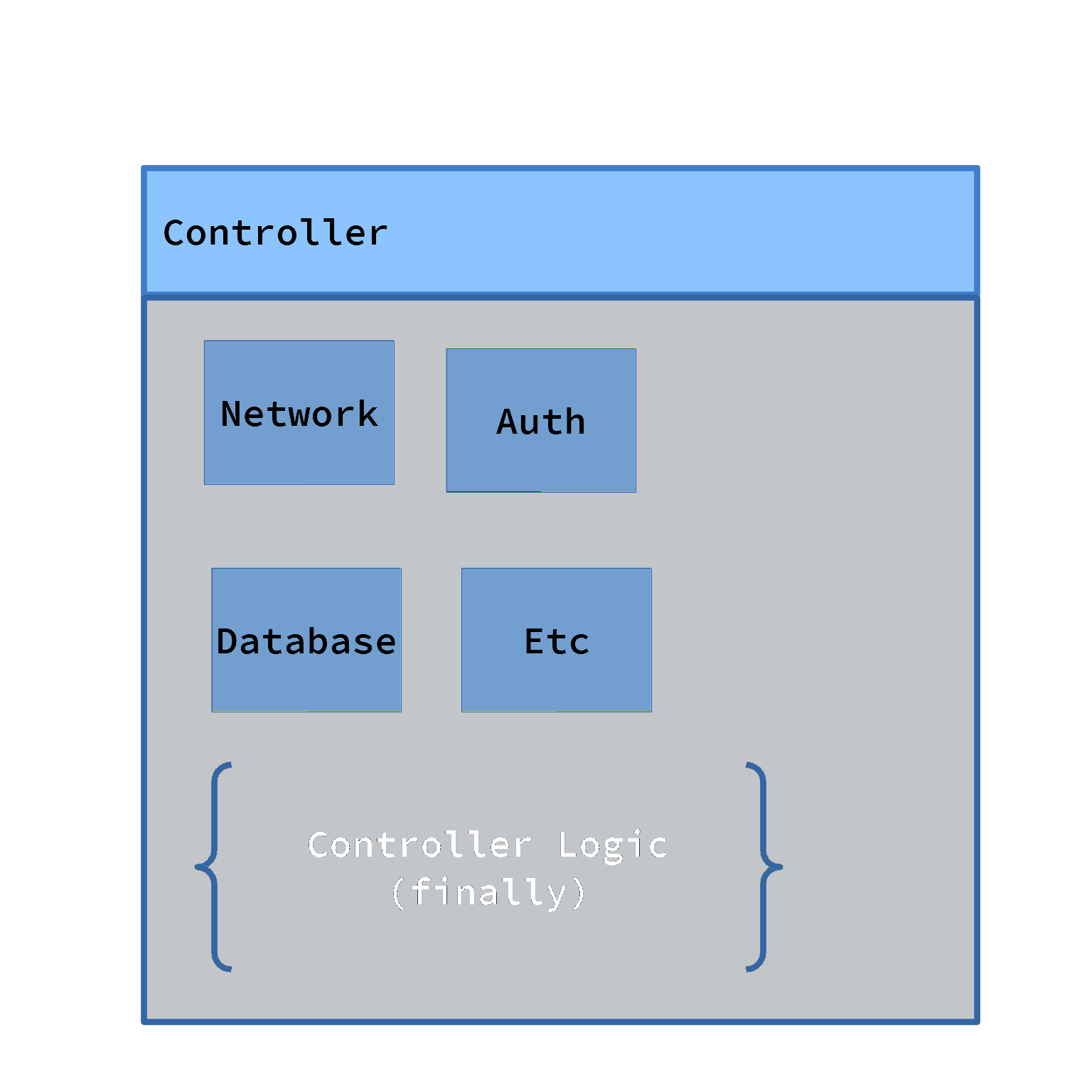

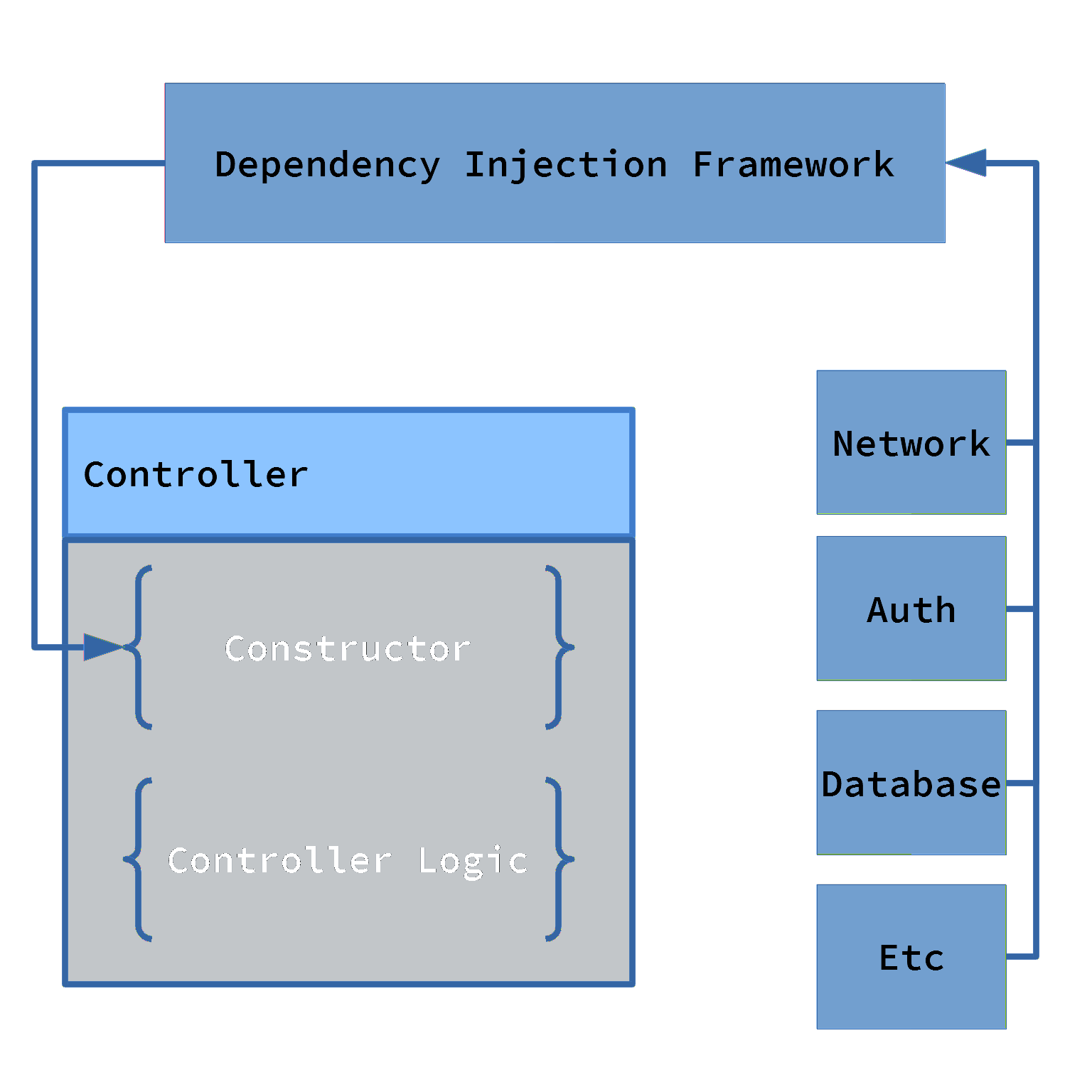

What it is: With dependency injection, classes are designed so that the things they depend on will be provided to them from the outside, rather than being built in. This encourages better isolation between classes because they no longer need to be responsible for initializing each other. It also encourages better encapsulation within a class or module because it must be able to completely handle all of its own initialization.

Why you should use it: Dependency injection enforces better separation of concerns. This makes the overall design more consistent and predictable, and it improves maintainability in the long term. And of course, from the perspective of testability, dependency injection makes it extremely easy to replace those dependencies with mocked ones.

There are many frameworks that can be used to manage and simplify dependency injection in your applications. These are great to have, but they can be pretty difficult to introduce into a mature code base. Fortunately, the DI design pattern doesn't depend on any of these frameworks, and switching to a DI model is usually pretty simple and can be done incrementally. Once your project is using dependency injection consistently, it's much easier to drop in a framework.
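To make the framework-free version concrete, here is a small Python sketch of constructor injection. Everything in it (`SmtpMailer`, `RecordingMailer`, `SignupService`) is a hypothetical name invented for the example. The optional constructor argument is one possible way to migrate incrementally, not the only one: existing call sites keep working, while tests pass in a replacement.

```python
class SmtpMailer:
    """Stand-in for a real dependency that would talk to a mail server."""

    def send(self, to: str, body: str) -> None:
        raise RuntimeError("no mail server available in this sketch")


class RecordingMailer:
    """Test double that records messages instead of sending them."""

    def __init__(self) -> None:
        self.sent: list[tuple[str, str]] = []

    def send(self, to: str, body: str) -> None:
        self.sent.append((to, body))


class SignupService:
    def __init__(self, mailer=None) -> None:
        # The dependency is provided from the outside. The default keeps old
        # call sites working while the code base is migrated step by step.
        self._mailer = mailer if mailer is not None else SmtpMailer()

    def register(self, email: str) -> None:
        self._mailer.send(email, "Welcome!")


mailer = RecordingMailer()
SignupService(mailer).register("ada@example.com")
print(mailer.sent)
```

Once every caller passes its dependencies explicitly, the default can be removed, and at that point wiring things up with a DI framework becomes a mechanical change.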

Test First Development

The reason you should perform tests of your software is that it gives you confidence that your software works as intended. All testing is about gaining confidence. The way that works is tests will exercise your software under many different scenarios. And they will keep doing this after each change you make. They ensure that the behavior of your software can't change unexpectedly. So, development patterns are great and useful, but they don't actually accomplish that goal on their own. They help you build your apps in a way that can be tested. But then you have to actually test it. To ensure that your tests actually get written and executed, the ideal strategy is to write them first. Write them before you write the application code. And once they're written, execute them early and often.

Test Driven Development

Test driven development is the part of this formula where you actually write the tests. And in particular, you really do write the tests before you write the application code. You might have heard this described as TDD, BDD, ATDD, or something similar. Those are all just different styles of the same basic idea: write tests, then write the code the tests are testing. There are two main reasons to do things this way. For one, it ensures that tests actually get written. If they come first, then you can't get lazy and skip them at the end. The other is that it guarantees your application code will actually be testable. The patterns you use to develop it play a part there, but there's more to it than that. TDD will force you to think about how your functions can be executed, and how you can verify the results of that execution. It will encourage a more functional design philosophy and make your design more consistent overall.

The way this is actually accomplished in practice is usually described as Red-Green-Refactor. The first step (red) is when you actually, literally write a test first with nothing for it to test. This test will fail, and thus show up red in your run reports. Then (green) you write the implementation which will satisfy the test and cause your test runs to pass again. Finally (refactor), you improve the test or write another one so that it more fully captures the requirements. And then the cycle repeats until you feel the work is completed.

Here's a pseudocode example of what that red-green-refactor process actually looks like. This example is for a simple endpoint controller in a RESTful API. Each line pairs a test (on the left) with the implementation that satisfies it (on the right).

Should respond with HTTP 200 => Response.send()

Should respond with a JSON record => Response.send(repo.get(1))

Should respond with the correct JSON record => Response.send(repo.get(request.id))

Should respond with 404 when there is no record => Result = repo.get(request.id); Response.send(Result || 404)

The example begins by creating one test that asserts the controller will return essentially any response. Then a second test asserts a more complete response. Then a more correct response. Finally it ends with handling for an error case.
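For a more concrete picture, here is one way the end state of those four cycles might look in Python using the standard `unittest` module. This is only a sketch: `FakeRepo`, `get_record`, and the dictionary shapes used for requests and responses are assumptions made up for the example, not part of any real web framework.

```python
import unittest


class FakeRepo:
    """Hypothetical test double for the repository the controller depends on."""

    def __init__(self, records):
        self._records = records

    def get(self, record_id):
        return self._records.get(record_id)


def get_record(request, repo):
    """Controller after the fourth cycle: look up the requested id, 404 if absent."""
    record = repo.get(request["id"])
    if record is None:
        return {"status": 404, "body": None}
    return {"status": 200, "body": record}


class GetRecordTest(unittest.TestCase):
    def setUp(self):
        self.repo = FakeRepo({1: {"name": "first"}, 2: {"name": "second"}})

    def test_responds_with_200(self):
        self.assertEqual(get_record({"id": 1}, self.repo)["status"], 200)

    def test_responds_with_a_record(self):
        self.assertIsNotNone(get_record({"id": 1}, self.repo)["body"])

    def test_responds_with_the_correct_record(self):
        self.assertEqual(get_record({"id": 2}, self.repo)["body"]["name"], "second")

    def test_responds_with_404_when_there_is_no_record(self):
        self.assertEqual(get_record({"id": 99}, self.repo)["status"], 404)


# Run the suite in-process; each test was red before its line of get_record existed.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(GetRecordTest)
outcome = unittest.TextTestRunner(verbosity=0).run(suite)
```

In a real session you would add the tests one at a time, watching each one fail before writing just enough of `get_record` to make it pass.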

Continuous Integration

Having tests is great, of course. But they don't do you much good if you don't run them. And it's probably worse than useless if you can't trust them. Continuous Integration is the step in your process where you actually execute your tests and require them to pass. There are many tools to enable this, and many strategies for making it happen. Too many to get into here. But I can get into the general process.

First, this should all be automatic, and it should all be unskippable. It is, of course, recommended that as a developer you should be running your tests locally while you do development. But that's hard to enforce and it could be disruptive to mandate it. Instead, the best practice is to connect a testing process into your version control system. Those tests will then run before new changes get included into the main code base. And those tests will be required to pass before the changes are accepted and merged into the main code base.

This same process also triggers whatever compile or build actions are required and eventually creates the artifact that you're going to deploy. Artifact here means whatever the result of your build is. An exe, a jar file, a dll, an NPM package, an apk, etc. And that artifact should be what you eventually deploy into your testing and then production systems. This workflow ensures that nothing can be released to customers without having gone through at least some baseline testing.

Testability Features

Up to now, I've focused just on unit tests, and ways to make them faster, easier, and more reliable. But those aren't the only tests, and they can't catch every issue. Other types of testing are important. And other types of testing are harder to do. Testability can and should be a feature of the functional design of an application, just like it is for the technical design. So what does that look like? Again, it depends on the nature of the application. But test configurations which let a tester jump directly to any point in the application's workflow are a good start. So are mechanisms for injecting arbitrary data into the application, and the ability to import and export application state.
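As a small sketch of what importing and exporting application state could look like, here is a Python example built around a made-up `CheckoutState` class. The workflow steps, field names, and JSON layout are all assumptions chosen for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class CheckoutState:
    """Hypothetical application state for a multi-step checkout workflow."""

    step: str = "cart"
    items: list[str] = field(default_factory=list)
    coupon: Optional[str] = None

    def export_state(self) -> str:
        """Serialize the whole state so it can be saved or attached to a bug report."""
        return json.dumps(asdict(self))

    @classmethod
    def import_state(cls, payload: str) -> "CheckoutState":
        """Rebuild the state from a snapshot, skipping every earlier workflow step."""
        return cls(**json.loads(payload))


# A tester jumps straight to the payment step with arbitrary data.
snapshot = '{"step": "payment", "items": ["book", "pen"], "coupon": "SAVE10"}'
state = CheckoutState.import_state(snapshot)
print(state.step, state.items)
```

With something like this in place, a tester can reproduce a late-stage scenario from a saved snapshot instead of clicking through the whole workflow each time.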

All of that is good. But most or all of that should be disabled in production configurations. In production, what you can do instead is logging, performance instrumentation, tracing, and similar. Broadly, all of these collectively are usually called Application Performance Management (APM). The goal of APM is observability. Being able to observe your application in detail and on demand in production enables developers to understand how it works, and to fully support it when it's not working as desired. There are a lot of options for tooling, but unfortunately for developers they tend to be big, expensive, enterprise-y things that you probably need your CTO's approval to use. If you have one of these tools, make sure you take full advantage. If you don't, then start building a case for getting one. And in the meantime, logging and tracing and performance instrumentation can all be done piecemeal.
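Here is one example of what piecemeal instrumentation might look like: a small Python decorator that logs how long a function takes, using only the standard library. The `timed` decorator, the `app.timing` logger name, and `load_report` are all hypothetical names for this sketch. It's nowhere near a full APM tool, just a starting point.

```python
import functools
import logging
import time

logger = logging.getLogger("app.timing")


def timed(func):
    """Log how long each call to func takes, including calls that raise."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            logger.info("%s took %.2f ms", func.__qualname__, elapsed_ms)

    return wrapper


@timed
def load_report(n: int) -> int:
    """Placeholder for some real work worth measuring."""
    return sum(range(n))


logging.basicConfig(level=logging.INFO)
print(load_report(1000))
```

Because the timing goes through the normal logging system, it can be turned up or down per environment without touching the instrumented functions.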

Cover photo by Daniel McCullough