What is a token

AI is meant to seem like magic. But there's no such thing as magic. It's all illusion. So, allow me to spoil that illusion for you.

AI has become hard to talk about. In part, that’s because the term “AI” doesn’t refer to any one thing. It’s mainly a marketing umbrella term for a variety of machine learning and natural language processing techniques (and computer vision, but that’s less relevant to this specific discussion). Prior to 2023, it would have been entirely unremarkable that these methods were in use. These techniques powered your feed on TikTok, Instagram, and virtually every other social network. They drove Netflix recommendations, ad bidding systems, and Google search rankings. It was a small or large part in many other domains, from network routing to payment fraud prevention. We collectively called these things “the algorithm”, and it was a well used tool in a great many toolboxes.

But “the algorithm” was also distant and hard to see. Since that time, those techniques have also come to form the basis of a generation of chatbots that can respond in remarkably adept ways to nearly any prompt we can give them. We call these things AI, and they can seem almost magical. That has contributed to a wave of capital investment and media attention at a scale that’s hard to fathom. This, in turn, can make AI feel revolutionary, and I’ve seen it equated to some of the most impactful technological developments throughout history: the printing press, electricity, the web, and spreadsheets, to name a few. It’s clearly true that this makes a lot of natural language processing more accessible to people, and that is a significant societal development. But it's a development that's buried in an enormous pile of marketing. And all of it is wrapped up in what is, frankly, deceptive product design. That just leaves us with something that seems like magic, and that's not a reasonable basis for evaluation. But there's no such thing as magic. It's all illusion. So, allow me to spoil that illusion for you.

Summary

I’ve tried to make it succinct, but this is still not exactly a short article. So, if you're an AI fan, I’ll save you from spending your tokens on this part and just provide my own summary.

- You can extract a lot of valuable insight from statistical analysis of large bodies of text

- That analysis happens in a long pipeline of progressive stages. Each one influences the ones that follow it

- Many of those stages are essentially throwing out information about the text. This is necessary to find patterns and correlations, as well as to manage the otherwise impossible cardinality of natural language

- This analysis is very useful to build things like classifiers, information retrieval, recommenders, and self-guided discovery

- The underlying data structures can also drive generative tools. But this comes at the end of a long chain of reductive normalization of the data set. That is reflected in the results

- There are cases where that's good enough. But, there’s no clear separation between whether it is or not. And there’s no indication in the results either way. That assessment rests on the operator’s expertise and judgement, and, critically, on their attention as well

What is a token?

I think it’s important to start at the beginning so that we have a common understanding of all the things built on top of that. I think of tokens as that beginning. Tokens are sort of the atomic unit of natural language processing (NLP) algorithms. Every application of natural language processing operates on a tokenized representation of text. Tokens are conceptually similar to words, and there is a lot of overlap between the concepts. But, there are at least 900,000 English words, not counting words in other languages that English users frequently borrow, or the new words and variations of words that people are constantly creating. It also ignores various ways to capitalize, and to (mis)spell them, or to combine them as compound words for distinct concepts. That’s a range of data where cardinality concerns come into play. And at that level of granularity, you also start losing the forest among the trees. The point of NLP methods is to extract patterns of usage, and if the library of atomic units you’re examining is that large, it’s likely that many of those units will appear zero or one time within any corpus of training data you might use. That makes it close to impossible to do any meaningful analysis of those tokens. And it makes it actually impossible to have useful handling of novel data.

There are a variety of classic techniques to reduce that cardinality. These are things like word stemming and lemmatization (reducing a word to its base form and tense, without prefix or suffix), case folding (removing alternate capitalizations of words), or stop word filtering (removing extremely common words like “the” or “and”). These are fundamentally efforts to normalize language to a sort of platonic ideal of itself. And they are not without drawbacks. The most interesting and informative parts of a text are the parts of it that are not normal. And so normalization risks destroying that information before you can ever analyze it. For example, consider that a rock band is a generic term for a musical group that performs in a certain genre. Unless, of course, it was normalized away from Rock Band, the extremely popular video game from 2007. Those are related but distinct concepts, and you can lose a lot of information depending on how it's tokenized.

An alternative is to tokenize text into n-grams, which are blocks of characters that don’t directly correspond to words. For example, a 3-gram tokenization of “directly correspond” would look like [dir, ect, ly, cor, res, pon, d]. Clearly, that can handle any arbitrary text, so long as it’s in a language you can break up into individual characters. There are ~185,000 possible combinations of English language 3-grams (including a placeholder for empty characters), and considerably fewer that occur in actual use. So you can see how this makes the question of cardinality considerably more tractable. But it also loses some context. For instance, it obscures that Superman is a singular concept, and instead it looks like [Sup, erm, an] in a simple 3-gram tokenization. Through training, the model would likely reestablish some of that correlation, but that’s still working backwards toward a relevance that was plainly obvious to a human in the source text.

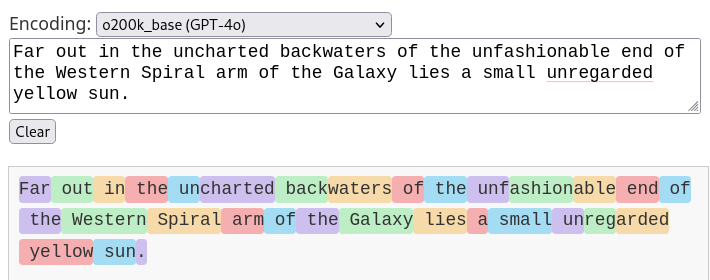

In practice, the large scale models we’re interested in are built on tokenizers that are themselves extensively trained machine learning models. There’s no simple way to characterize how they behave. They perform all of these techniques and more. What that means in practice is easier to grasp by example. So, with apologies to Douglas Adams, let’s consider the opening line of Hitchhiker's Guide to the Galaxy:

As you can see, most of the tokens are very intuitive. They’re usually single words. In the case of [un, charted], it’s a prefix and a base word. [back, waters] is two tokens for a compound word; also very intuitive. But then [unf, ashion, able] and [un, reg, arded] seem surprising by comparison. I couldn’t tell you why GPT-4o tokenizes those words that way. But that’s fine. The point of this isn’t to build a tokenizer, but rather to help us think about what tokens are. For our purposes, tokens are units of linguistic analysis. They are mostly word-like constructs, and sometimes sub-word constructs or punctuation. These constructs form a compressed and normalized language data space.

How do we go from tokens to structured analysis of documents?

Once you’ve converted a document into a series of tokens, you can start to do some statistics with those tokens. To start with, you count them up. A simple count gives you a token frequency distribution across the document. You can compare that to the frequency distribution of the entire corpus, to gain some insight into what distinguishes one document from the average. And because the non-normal qualities of any given text are the most information rich, you would want to invert the frequency for comparison. This way, when some bit of text uses uncommon terms more often than usual, that becomes immediately apparent. This is called the inverse document frequency-term frequency (IDF-TF), and it forms a critical part of NLP methods. For example, across all English writing, the term “token” is unlikely to be used even once. But I’ve used it 23 times in 1446 words, as of this sentence. An NLP pipeline should easily classify this document as being about concepts that are common to natural language processing, based solely on that frequency analysis.

You can also move beyond simple token frequencies, and analyze token n-gram frequency. That is, 2-, 3-, or n-groups of tokens in sequence. This begins to tell you not just how often those tokens are used, but how they’re used together. You can start to very easily identify things like prepositional phrases, because they form extremely common word groups like “in a”, “of the”, “to the”, etc. Another easy example are contractions, as the usage of contracted and uncontracted words will closely correspond to each other. All of these token frequencies get bundled up into what we call a bag of words. A bag of words is a vectorized representation of text. As you probably know, a vector is a form of data that encapsulates a direction and a magnitude. It always puts me in mind of physics classes in school, because that’s where they came up earliest and most often for me. But, it’s not a concept that’s unique to physics, clearly. In a bag of words, every n-gram constitutes one dimension in a multidimensional space. The frequency is then a magnitude in that dimension. If you remember your grade school geometry homework, you probably saw and drew a lot of lines on XY graphs. If you treat those graphs like vectors, X and Y are dimensions, and the point coordinates are the magnitude of the vector. You can compare those vectors using relatively basic trigonometry. One method is to find the euclidean distance. This is exactly the exercise where you find the distance between two points on a graph that you probably did in one of your high school math classes. It’s a little bit more complicated than you likely remember, because there are more than 2 or 3 dimensions, but the math is the same. Another is to find the cosine distance, which discards the absolute magnitude of your vectors to instead compare their relative angles.

With just this level of analysis as a foundation, you can find many, many latent correlations using machine learning methods. In fact, you can likely choose any arbitrary number of correlation factors for a machine learning pipeline to identify and it will. That’s assuming you start with a sufficiently large and diverse training set, of course. Whether those correlations are meaningful or not is another question entirely. You’ve likely heard it said that correlation is not causation. What that means is sometimes things correlate just out of pure coincidence. In large enough datasets, those coincidences are basically guaranteed to occur. In fact, it’s less a question of whether they will occur, and more a question of how often. So, some of the correlations you would find will represent style, or grammar, or conventional wisdom. Others would represent bias, common misconceptions, or active trolling. And still more would represent nothing at all; they would just be clusters of statistical noise.

How do we go from analysis to generation?

At this point, hopefully you have at least a conceptual understanding of how we would take unstructured text and use it to derive some analytic insights. We can determine the frequency of occurrence for word-like things, as well as for sequences of those word-like things. We can also determine similarities between different sequences of those word-like things. We can even deduce stylistic, grammatical, and structural patterns with reasonably good confidence. Based on that, I’m sure you can imagine classifiers that could identify the style of a prolific author, or the form of a 5 paragraph essay. And given that we already have probabilities for how words are used in sequence, I’m sure you can also imagine turning that around and using it to predict text, instead of just classifying it. The “GPT” in ChatGPT stands for generative pretrained transformer. That kind of probabilistic word sequencing is, fundamentally, how transformers work. And the crop of chatbots you would think of as AI are all transformers.

Absent any other factor, invoking a transformer would generate a probability distribution [archive link] for one token and then stop. A controller placed on top of the transformer would select from the distribution, and decide whether to request more tokens or not. You’d want one of those tokens to be some kind of terminator, like an end-of-document marker (EOD). That way the transformer could eventually emit an EOD, and the controller would stop requesting more tokens. You could adjust the probability weighting of that EOD token to make your text generator more or less verbose. And if we’re talking about a modern large-scale model, there are likely 100s of billions of other parameters you can tailor to produce different styles, topics, dialects, or even languages. Of course, 100s of billions is an inhuman number of parameters to adjust. You wouldn’t even be able to recognize the qualities they represent in the vast majority of cases. So, the way you would actually make those adjustments is to do some minor retraining, or fine-tuning, of the model using a small set of documents that you expect to be relevant to your use case.

But how does the transformer generate that first token? This is where we start to talk about context. If you requested a token from a transformer with genuinely zero context, you would get back something randomly selected from the weighted distribution of all the tokens in its vocabulary. But that’s kind of useless. In practice, those transformers get primed with a bunch of context up front. Context is just a collection of other tokens for the transformer to riff on. The large majority of AI tools you would ever use will have what we call a system prompt. This is merely the first bit of text that gets prepended to the context before your actual prompt. It’s otherwise not special. Then there’s your actual prompt. In a minimal app, those two things could account for the whole context. A trivial but otherwise realistic example might consist of the system prompt “you’re a nice helpful robot who likes to answer questions." Plus the user prompt, "what is the meaning of life?” And then given that initial text, the transformer generates the next likely token, and the next, and the next, until the controller stops asking for more.

And that’s really it. There’s no magic in it. There never was. And no matter how fluent the response seems to be, the machine does not know the meaning of life. Everything it does is a lot of machine learning-derived statistical correlations on top of a little bit of normalization and explicit statistical analysis. You may be wondering how certain other features come into play. There are a couple that come up a lot. One is RAG, or retrieval augmented generation. This adds a step to perform a conventional search of the web or some other data source, and then load the results into the context before the transformer starts generating responses. Another is reasoning. Reasoning is not super well defined, but it generally depends on using two (or more) prompts to evaluate each other's work. It might use a crowd of invocations, plus a consensus mechanism to select the most semantically common response. Or it might use an adversarial invocation to rate the quality of the response from the first, and regenerate the response until the adversary deems it acceptable.

What does that mean for tools?

I am a software engineer, and so I’ll limit my conclusions to that domain. I suspect many of them would generalize, but not all, and not everywhere. And I’m not actually in a position to know the difference, which is a critical point in all of this.

The marketing positions AI tools as hypercompetent everything-machines that we mere humans must learn to control, lest they take over the world, or at least take our jobs. It’s not an environment that promotes nuanced critique. But I am confident that those claims, at least, are overblown. As I mentioned before, AI is itself more of a marketing term than a technical one. If nothing else, it’s a prepackaged collection of a large number of NLP and statistical techniques. And I think it’s uncontroversial to say that those techniques are overwhelmingly useful.

The kinds of assistance we were already getting from those techniques were very helpful, even before they all got subsumed into the AI moniker. I rely heavily on tool support as an engineer, and I use Jetbrains IDEs because I find them to be the best and most supportive tools. They provide syntactically aware code completion. They have excellent search, navigation, and refactoring support. Language servers and the language server protocol are near-miracles that have made code editing and maintenance dramatically better. I would love to have more tools like that. I dream about being able to ask my IDE where else in a code base some pattern is used. Or to have it indicate files that tend to change in tandem.

Thus far, I find AI code generation merely unimpressive. It just doesn't seem like much of an advancement over the normal tools I was already using every day. This covers narrowly scoped code generation and targeted refactoring. But a great deal of the broader social conversation centers on much larger scale code generation. This is the so-called patterns of vibe coding, or agentic generation. These patterns give me pause. That’s partly based on my understanding of how this all works, which I've just explained. And it's partly based on my understanding of the way human minds and brains work. The human element is an extremely broad topic, of course, and not one I’m qualified to wrap up in a 2 page summary like with NLP. But to pick out a couple of specific points that concern me, I worry about anchoring, and vigilance.

Anchoring

Anchoring is a phenomenon where people will get stuck and even become fixated on the first option they encounter. It’s really easy to trigger. And AI generated code snippets definitely have an anchoring effect on a programming session. Unfortunately, nowhere in the billions of parameters that make up AI models is a vector that captures correctness, or suitability to a given task. Those determinations are and will remain the responsibility of the programmer. That’s a complex and highly situational challenge. The anchoring effect of starting with an AI generated solution can present an additional obstacle to what is already one of the most critical activities we do in software development. That introduces a dynamic where the tools could make the easy parts easier, at the expense of making the hard parts harder. That seems like a misuse case, to me.

Vigilance

Vigilance means the same thing in this context as in colloquial speech. It’s remaining alert for potential hazards. And it’s really, really hard. It’s why you feel exhausted after a day of driving. Humans are just bad at this. So bad, in fact, that early humans may have domesticated wolves because it was easier than guarding against them. Large scale code generation creates the risk that programming becomes a task of vigilance, more than analysis, or problem solving, or whatever else. That’s particularly true with agentic code generation. My concern is workflows like that inhabit the intersection of the most serious weaknesses of AI tools, and the need for constant high vigilance that people are not able to sustain. Recently a new term has entered the discussion about AI coding: LLM burnout. This sounds to me like it's largely vigilance fatigue.

Takeaway

The products that we would think of today as “AI” are, at their core, performing natural language processing. The central concern of NLP is how similar some text documents are to a collection of other text documents. That is to say, how normal they are. As the processing becomes more extensive, it becomes able to tackle that concern with more granularity on more axes of normality.

Based on that, there is some easy, general purpose guidance to be had, but not much. Single purpose tools that offer to classify and analyze documents will give reliable and repeatable results. It’s useful to know whether some source file is like another. It’s useful to know the ways in which they differ. It’s useful to have semantically aware search and suggestions. I wish we had more tools in this vein. I wish we had detectors for duplicated behavior, the same way we have them for duplicated text. I wish we could rate code as to whether it’s functional vs declarative vs imperative. Or whether it’s actually self-documenting, as we like to tell ourselves. Transformer-driven text generators do something very much like those kinds of classification. But the output isn’t a score or a confidence interval, it’s a likely continuation of the text. That means we can’t use them to do the kind of second and third order analysis that we would assume.

Despite that limitation, the marketing and the default behavior of these chatbots is to skip straight to the end and have it produce─whole cloth─text resembling the higher order analysis. That's a problem. That result can't be trusted, but verifying the result is more work than doing the analysis in the first place. That's exhausting, and people can't sustain vigilant critical skepticism against what feels like a zip bomb targeting human attention. Even less so when the output is something they want.

There’s some irony that the tremendous scale of those transformer models makes them less trustworthy. Massive general purpose models include factors that represent correlations ranging from rhyming to sarcasm to object-orientedness and beyond. Single-purpose models would have clearer boundaries. A model that was built to operate on source code, using a token vocabulary based on nodes in an abstract syntax tree rather than natural language n-grams would be radically different. That single purpose syntax tree based model would be unable to get distracted by those natural language vectors which would be irrelevant to producing or analyzing source code. It would also be capable of failing to respond to a prompt. That may sound like a bad thing, but in conventional software it would be the difference between a clear error message and undefined behavior. This would clearly delineate appropriate uses for that tool. Some of what we’re all doing is feeling out the boundaries of where AI behavior becomes too undefined to be useful. It’s probably a good idea to consciously recognize that’s what we’re doing. And, where possible, we should seek out products that make this boundary explicit.